SG50 was a collaboration with my PhD student, Suranga Nanayakkara, and architect Thomas Schroepfer. A map of Singapore composed of over 2000 LEDs floated on the Singapore River. When passers-by went to our website on their mobile phones, they would enter their home postal code, causing a light "splash" at that location on the floating map, and a tone pattern specific to where they lived would play on their device. A crowd of people thus created a celebratory "symphony" of the neighborhoods from whence they came. This work uses the same mobile networked synthesizer platform as Vocal Trak.

Vocal Trak (2017). Collaborators: Kerem Göksel, Lonce Wyse, Vidya Rangasayee

Vocal Trak was composed for the Stanford Laptop Orchestra during a 2017 sabbatical at CCRMA. The sound is spatialized across speakers and audience mobile devices, and the audience has shared control over the sounds their devices synthesize. Sounds are drawn from human and animal vocalisations, then transformed and cross-synthesized with neural network style-transfer techniques. Performance interfaces include the 3D "Game Trak" string controller, voice, and phones. The mobile networked synthesizer was developed for pieces of this sort.

(2018) Real-valued parametric conditioning of an RNN for real-time interactive sound synthesis. Proceedings of the MUME workshop at the International Conference on Computational Creativity. Salamanca, June 2018.

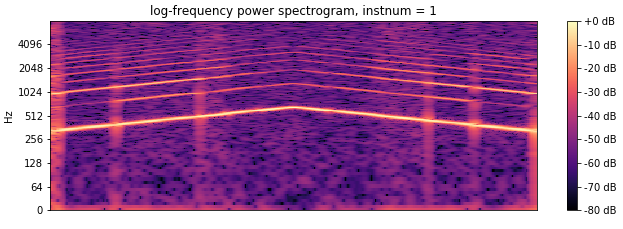

A deep-learning Recurrent Neural Network (RNN) for audio synthesis is trained by augmenting the audio input with information about signal characteristics such as pitch and amplitude. The result after training is an audio synthesizer that is played like a musical instrument, with the desired musical characteristics provided as real-time parametric control.
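To make the idea of augmenting the audio input concrete, here is a minimal sketch of how conditioned training pairs might be assembled. It is not the code from the paper: the function names, the three-element frame layout, and the pitch normalisation are all assumptions chosen for illustration. Each input frame pairs the current audio sample with the pitch and amplitude values in force at that moment, and the target is the next audio sample.

```python
import math


def conditioned_frames(audio, pitch, amplitude):
    """Pair each audio sample with its control parameters (hypothetical layout).

    Returns (inputs, targets): inputs[t] is [sample, pitch, amplitude] at
    step t, and targets[t] is the audio sample at step t + 1.
    """
    if not (len(audio) == len(pitch) == len(amplitude)):
        raise ValueError("audio, pitch and amplitude must have equal length")
    steps = range(len(audio) - 1)
    inputs = [[audio[t], pitch[t], amplitude[t]] for t in steps]
    targets = [audio[t + 1] for t in steps]
    return inputs, targets


def sine_training_example(freq_hz, amp, n_samples, sample_rate=16000):
    """Build one toy training sequence from a steady sine tone."""
    audio = [
        amp * math.sin(2 * math.pi * freq_hz * t / sample_rate)
        for t in range(n_samples)
    ]
    # Assumption: pitch is scaled to 0..1 by dividing by the Nyquist frequency.
    norm_pitch = freq_hz / (sample_rate / 2)
    return conditioned_frames(audio, [norm_pitch] * n_samples, [amp] * n_samples)


inputs, targets = sine_training_example(freq_hz=440.0, amp=0.8, n_samples=5)
```

At synthesis time the same frame layout would be used, except that the pitch and amplitude entries come from a live controller and the audio entry is the network's own previous output.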

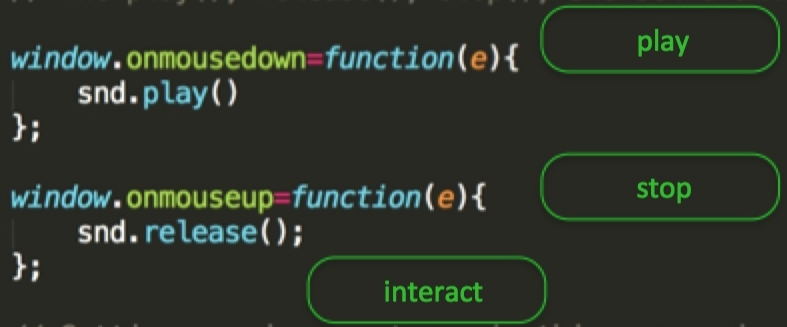

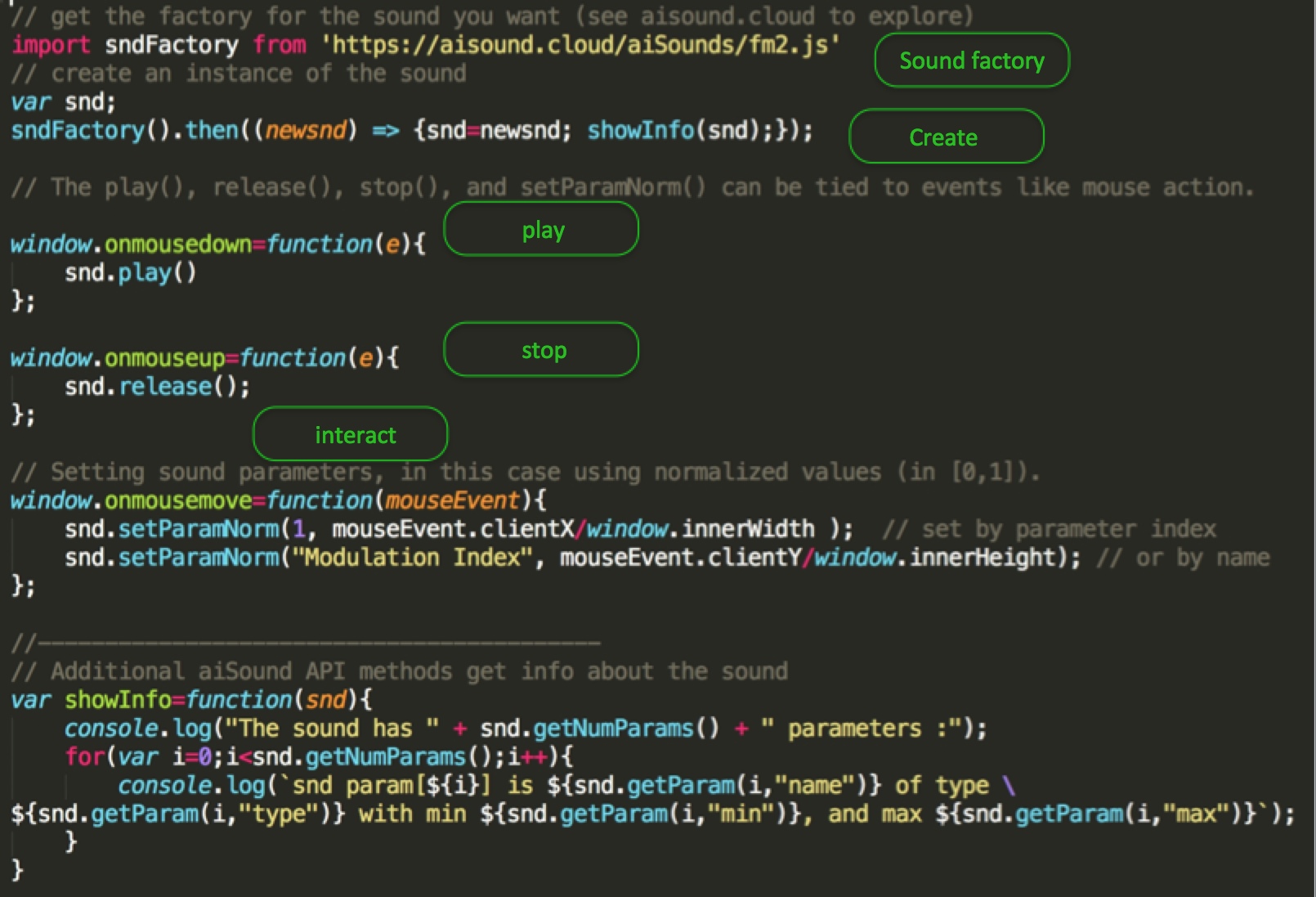

aiSound is a library for building sound models with the WebAudio API and exposing a simple and uniform interface so that anyone can easily build interactive synthesis into their web applications. The aiSound website, aisound.clound, has examples and demos, and all the code is on GitHub.

This is a little demo of the API for interactive sound on web pages using aiSound. Click on the image; it's live!

I developed a MOOC at Kadenze, Web Coding Fundamentals for Artists, for the curious with no programming experience. The course and syllabus are at Kadenze, but here is the 2-minute promo video:

I initiated, directed, and now co-direct the Art/Science Residency Program, which over the past 12 years has funded and brought 25 international artists for semester-long visits to the National University of Singapore. We find research labs from all over the University to host their visits. Engagement between the various practice and knowledge cultures of researchers and artists is the prime objective.

Italian artist Maurizio Marinucci (aka TeZ) working with students exploring the effect of electricity, magnetism, monochrome light, and sound on the life of plants.

This 2010 installation was part of an open series of musical events with audiences meandering in and out of the lobby and concert hall. D(Th)is Loca(mo)tion(!) stole sound from other composers' pieces in the concert hall and piped it to the lobby, where guests swung pendula to create Doppler-shifting effects. The results were streamed back to phones that people in the concert hall were encouraged to listen to: an "altered" version of what they were simultaneously hearing from the stage.
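For a sense of the size of the Doppler effect a swinging pendulum can produce, here is a rough sketch. It makes several assumptions that the description above does not confirm: that the moving element is the sound source, that the listener is stationary, that the swing is a small-angle pendulum, and that the pendulum's tangential velocity points straight at the listener. The function names and parameter values are illustrative only.

```python
import math

SPEED_OF_SOUND = 343.0  # metres per second in air at roughly 20 degrees C


def pendulum_velocity(t, length_m, max_angle_rad, g=9.81):
    """Signed tangential velocity (m/s) of a small-angle pendulum at time t.

    Uses theta(t) = max_angle * cos(omega * t), so velocity is
    length * d(theta)/dt.
    """
    omega = math.sqrt(g / length_m)
    return -length_m * max_angle_rad * omega * math.sin(omega * t)


def doppler_frequency(source_hz, velocity_toward_listener):
    """Frequency heard by a stationary listener from a moving source."""
    return source_hz * SPEED_OF_SOUND / (SPEED_OF_SOUND - velocity_toward_listener)


# A 2 m pendulum released from 0.5 rad, sampled a quarter period after release.
omega = math.sqrt(9.81 / 2.0)
speed = abs(pendulum_velocity(math.pi / (2 * omega), 2.0, 0.5))
heard_approaching = doppler_frequency(440.0, speed)
heard_receding = doppler_frequency(440.0, -speed)
```

Under these assumptions the pitch rises as the pendulum approaches and falls as it recedes, so each swing sweeps the sound slightly above and below its original frequency.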